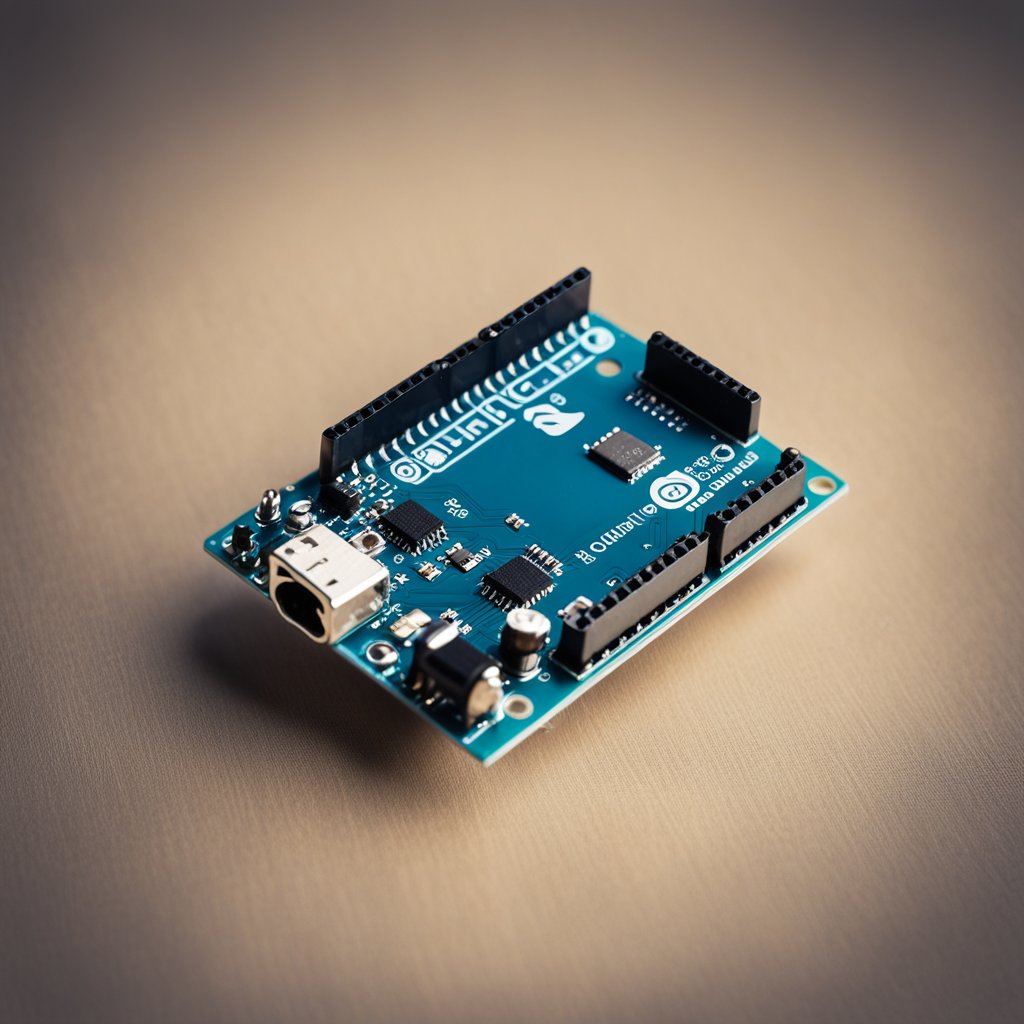

Welcome to:

Project Allusion.

Your resource for learning Arduino projects, picking up tips & tricks, and reading electronics & gadget news.

years of experience

about us

The united way to find specialist services

“Lorem ipsum dolor sit amet, consectetur adipiscing elit, sed do eiusmod tempor incididunt ut labore.”

Nisi porta lorem mollis aliquam ut. Cras adipiscing enim eu turpis. Posuere ac ut consequat semper viverra nam libero justo. Integer eget aliquet nibh praesent tristique.

or Call +123 4561 5523

our services

What we offer

Lorem ipsum dolor sit amet, consectetur adipiscing elit. Ut elit tellus, luctus nec ullamcorper mattis, pulvinar dapibus leo. Sit amet massa vitae tortor.

Lorem ipsum dolor sit amet, consectetur adipiscing elit, sed do eiusmod tempor incididunt ut labore et dolore magna aliqua. Lacus sed viverra tellus in hac habitasse dictumst. Nisi porta lorem mollis. Posuere ac ut consequat semper viverra nam libero justo.

Why Choose Us

Our most popular Tutorials

Ultrices eros in cursus turpis massa tincidunt dui ut. Turpis in eu mi bibendum neque egestas. Sollicitudin ac orci phasellus egestas tellus rutrum tellus. Eu consequat ac felis donec.

Tincidunt vitae semper quis lectus. Sed ullamcorper morbi tincidunt ornare massa eget egestas. Quis eleifend quam adipiscing vitae proin sagittis nisl rhoncus mattis. Egestas sed sed risus pretium quam vulputate dignissim.

work progress

We make life easier

Lorem ipsum dolor sit amet, consectetur adipiscing elit. Ut elit tellus, luctus nec mattis dapibus.

1.

Read our Starter Guides

Lorem ipsum dolor sit amet, consectetur adipiscing elit.

2.

Ask Our Expert Your Questions

Lorem ipsum dolor sit amet, consectetur adipiscing elit.

3.

Build Your Own Projects

Lorem ipsum dolor sit amet, consectetur adipiscing elit.

Who We Are

Our Story

Lorem ipsum dolor sit amet, consectetur adipiscing elit. Ut elit tellus, luctus nec ullamcorper mattis, pulvinar dapibus leo. Nisi porta lorem mollis aliquam ut. Posuere ac ut consequat semper viverra nam libero justo. Integer eget aliquet nibh praesent tristique magna sit amet purus.

happy clients

“Lorem ipsum dolor sit amet, consectetur adipiscing elit, sed do eiusmod tempor incididunt ut labore et dolore magna aliqua.”

Mack McGuire

Miami, FL

“Tincidunt lobortis feugiat vivamus at augue eget arcu dictum varius. Nunc aliquet bibendum enim facilisis gravida neque convallis.”

Ellyn Garzon

Santa Cruz, CA

testimonials

What our clients say about us

Nisi porta lorem mollis aliquam ut. Cras adipiscing enim eu turpis. Posuere ac ut consequat semper viverra nam libero justo praesent tristique.